Deep Fashion3D: A Dataset and Benchmark for 3D Garment Reconstruction from Single Images

Heming Zhu 1,2,3 † , Yu Cao1,2,4 † , Hang Jin1,2 †, Weikai Chen 5, Dong Du6, Zhangye Wang 3,

Shuguang Cui 1,2, Xiaoguang Han1,2,*

*Corresponding email: hanxiaoguang@cuhk.edu.cn

† Joint first authors

1 The Chinese University of Hong Kong, Shenzhen

2 Shenzhen Research Institute of Big Data

3 State Key Lab of CAD&CG, Zhejiang University

4 Xidian University

5 Tencent America

6 University of Science and Technology of China

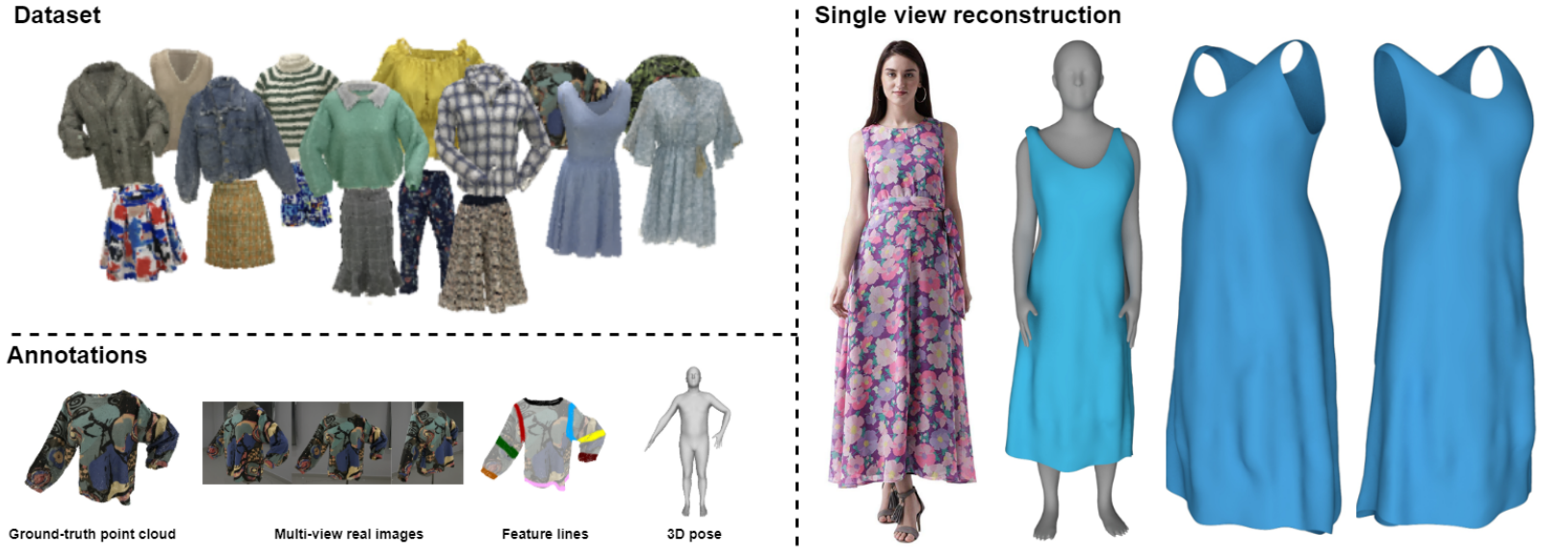

Figure 1: We present Deep Fashion3D, a large-scale repository of 3D clothing models reconstructed from real garments. It contains over 2000 3D garment models, spanning 10 different clothing categories. Each model is richly labeled with a groundtruth point cloud, multi-view real images, 3D body pose and a novel annotation named feature lines. With Deep Fashion3D, inferring the garment geometry from a single image becomes possible.

News

2023-6-25 Deep Fashion3D V2 is available, where the dense garment point clouds are equipped with more accurate feature line annotations, registered meshes with category-specific triangulation, and high-resolution texture maps! Click here to browse the release notes.

Overview

High-fidelity clothing reconstruction is the key to achieving photorealism in a wide range of applications including human digitization, virtual try-on, etc. Recent advances in learning-based approaches have accomplished unprecedented accuracy in recovering unclothed human shape and pose from single images. In contrast, modeling and recovering clothed humans and 3D garments remains notoriously difficult, mostly due to the lack of large-scale clothing models available to the research community. To fill this gap, we present Deep Fashion3D, a large-scale repository of 3D clothing models reconstructed from real garments. It contains over 2000 3D garment models, spanning 10 different clothing categories. Each model is richly labeled with a groundtruth point cloud, multi-view real images, 3D body pose and a novel annotation named feature lines. With Deep Fashion3D, inferring the garment geometry from a single image becomes possible. To demonstrate the advantage of Deep Fashion3D, we propose a novel baseline approach for single-view garment reconstruction, which leverages the merits of both mesh and implicit representations. A novel adaptable template is proposed to enable the learning of all types of clothing in a single network. Extensive experiments have been conducted on the proposed dataset to verify its significance and usefulness. The dataset will be publicly available soon.

Download

Citation

If you use Deep Fashion3D in your work, please consider citing our paper!

@inproceedings{zhu2020deep,

Dataset Description

We contribute the Deep Fashion3D dataset, the largest collection of 3D garment models to date. The dataset has several appealing features:

- Firstly, Deep Fashion3D contains 2078 models reconstructed from real garments, covering 10 different categories and 563 garment instances. To the best of our knowledge, Deep Fashion3D covers many more garment categories than other publicly available datasets specialized for 3D garments.

- Secondly, Deep Fashion3D contains rich annotations including 3D feature lines, 3D body pose and the corresponding calibrated multi-view real images. It is worth mentioning that Deep Fashion3D is the first dataset to present feature line annotations tailored for 3D garments, which can provide strong priors for garment reasoning tasks, e.g., 3D garment reconstruction, classification, retrieval, etc.

- Thirdly, each garment in Deep Fashion3D is randomly posed to enhance the variety of real clothing.

Dataset Statistics

Table 1 shows the statistics of each clothing category of Deep Fashion3D. Deep Fashion3D contains 2078 models reconstructed from real garments, covering 10 different categories.

Table 1: Statistics of each clothing category of Deep Fashion3D.

| Type | Number | Type | Number |
|---|---|---|---|
| Long-sleeve coat | 157 | Long-sleeve dress | 18 |
| Short-sleeve coat | 98 | Short-sleeve dress | 34 |
| None-sleeve coat | 35 | None-sleeve dress | 32 |
| Long trousers | 29 | Long skirt | 104 |
| Short trousers | 44 | Short skirt | 48 |
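The counts in Table 1 are uneven across categories, which is worth keeping in mind when building training and test splits. The following is a minimal Python sketch that tallies the table and reports each category's share. The names `TABLE_1` and `summarize` are illustrative assumptions and are not part of any official dataset tooling.

```python
# Per-category counts copied verbatim from Table 1.
TABLE_1 = {
    "Long-sleeve coat": 157,
    "Short-sleeve coat": 98,
    "None-sleeve coat": 35,
    "Long trousers": 29,
    "Short trousers": 44,
    "Long-sleeve dress": 18,
    "Short-sleeve dress": 34,
    "None-sleeve dress": 32,
    "Long skirt": 104,
    "Short skirt": 48,
}


def summarize(counts):
    """Return (total, share per category, largest category, smallest category)."""
    total = sum(counts.values())
    shares = {name: n / total for name, n in counts.items()}
    largest = max(counts, key=counts.get)
    smallest = min(counts, key=counts.get)
    return total, shares, largest, smallest


total, shares, largest, smallest = summarize(TABLE_1)

# Print categories from most to least frequent with their share of the table total.
for name, n in sorted(TABLE_1.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<20} {n:>4}  {shares[name]:6.1%}")
print(f"Largest: {largest}, smallest: {smallest}, table total: {total}")
```

A per-category share like this could be used, for example, to weight a sampler so that rare categories are not drowned out by the most common ones.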

Data Examples

Figure 2 shows data examples for each type of garment in the Deep Fashion3D dataset. All of the models in the Deep Fashion3D dataset are reconstructed from real garments.

Figure 2: Example garment models of Deep Fashion3D.

Annotation Description

Figure 3 gives a quick overview of the annotations of the Deep Fashion3D dataset. To facilitate future research on 3D garment reasoning and reconstruction tasks, we provide rich annotations along with the Deep Fashion3D dataset, consisting of the following components:

- Feature line annotation: A novel feature line annotation tailored for 3D garments is proposed in the Deep Fashion3D dataset. Akin to facial landmarks, the feature lines denote the most prominent features of interest, e.g., the open boundaries, the neckline, cuff, waist, etc., which can provide strong priors for a faithful reconstruction of 3D garments.

- Calibrated multi-view real images: For each 3D clothing model, we provide the corresponding calibrated multi-view real images, which are critical to generalizing the trained model to images in the wild.

- 3D pose: For each 3D clothing model, we label it with a 3D pose represented by SMPL coefficients. Because the human body and clothing are highly coupled, we believe the labeled 3D pose can help infer the global shape and pose-dependent deformations of the garment model.

For more details about the dataset, please refer to the dataset download page.
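To make the annotation components concrete, the following Python sketch shows one way a single garment sample could be held in memory. It is not the official file format: the class `GarmentSample`, its field names, and the helper `polyline_length` are hypothetical, and the 72-value pose vector assumes the standard SMPL axis-angle layout (24 joints × 3), which this page does not confirm.

```python
from dataclasses import dataclass
from math import dist
from typing import Dict, List, Tuple

Point3 = Tuple[float, float, float]


@dataclass
class GarmentSample:
    """Hypothetical in-memory record for one garment model (not the official format)."""
    category: str                            # e.g. "Long skirt"
    point_cloud: List[Point3]                # groundtruth 3D points
    feature_lines: Dict[str, List[Point3]]   # e.g. "neckline" -> ordered 3D polyline
    image_paths: List[str]                   # calibrated multi-view real images
    smpl_pose: List[float]                   # assumed: 72 axis-angle values (24 joints x 3)

    def has_valid_pose(self) -> bool:
        # Checks only the assumed vector length, not the pose itself.
        return len(self.smpl_pose) == 72


def polyline_length(line: List[Point3], closed: bool = False) -> float:
    """Sum of segment lengths along an ordered 3D polyline.

    With closed=True the last point is joined back to the first,
    which suits loop-like feature lines such as a cuff or waist.
    """
    pts = list(line)
    if closed and len(pts) > 1:
        pts.append(pts[0])
    return sum(dist(a, b) for a, b in zip(pts, pts[1:]))


# Toy example: a unit-square "cuff" loop in the z = 0 plane.
cuff = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
sample = GarmentSample(
    category="Long-sleeve coat",
    point_cloud=cuff,
    feature_lines={"cuff": cuff},
    image_paths=[],
    smpl_pose=[0.0] * 72,
)
print(polyline_length(sample.feature_lines["cuff"], closed=True))
```

Keeping feature lines as named, ordered polylines makes it straightforward to measure or resample them independently of the dense point cloud.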

Figure 3: A quick skim through the annotations of Deep Fashion3D dataset.

Experimental Results

Benchmarking on Single-view Reconstruction

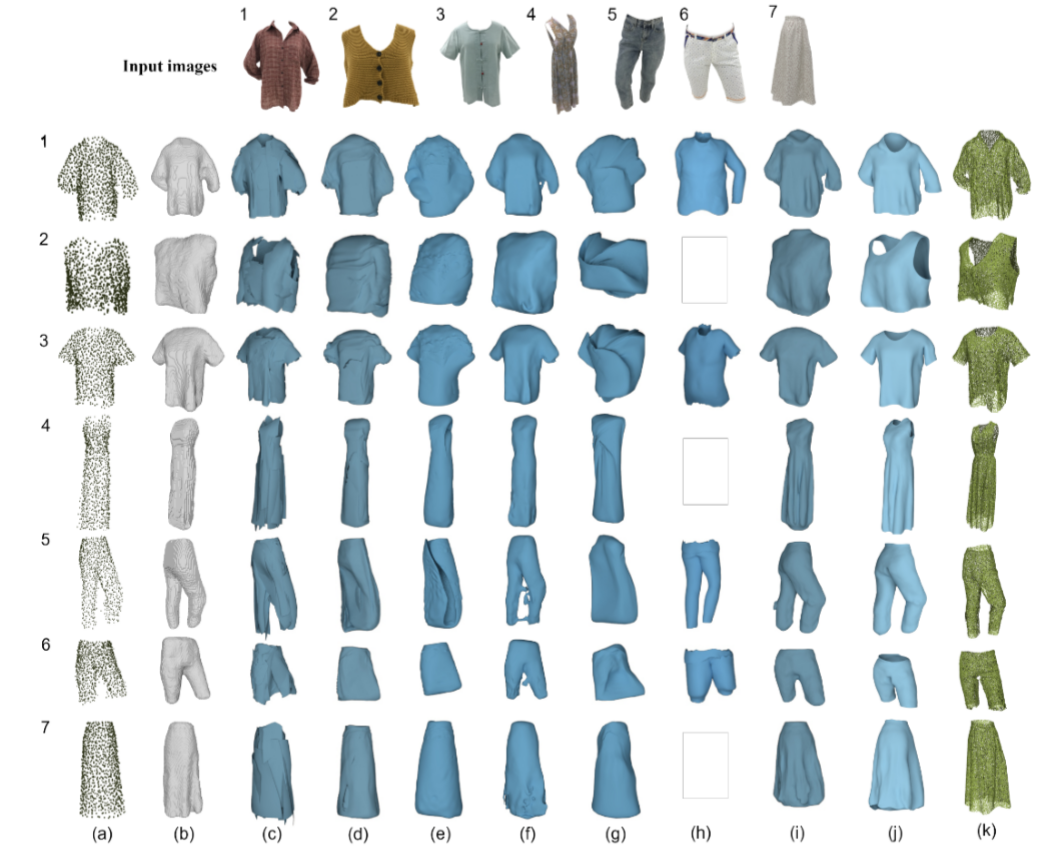

Figure 4 compares our results against other methods. For each input image, the results are shown in the following order: (a) PSG (Point Set Generation); (b) 3D-R2N2; (c) AtlasNet with 25 square patches; (d) AtlasNet with a sphere template; (e) Pixel2Mesh; (f) MVD (multi-view depth generation); (g) TMN (topology modification network); (h) MGN (Multi-Garment Network); (i) OccNet; (j) Ours; (k) the groundtruth point clouds. A blank image in the figure means that the method failed to generate a result.

Figure 4: Experiment results against other methods.
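This page does not state which quantitative metric accompanies the comparison. As an assumption for illustration only, the sketch below uses the Chamfer distance, a common choice for comparing a reconstructed point set against a groundtruth point cloud. It is the unsquared, mean-normalized variant and uses brute-force nearest-neighbour search, so it is only suitable for small point sets. The function name `chamfer_distance` is hypothetical.

```python
from math import dist
from typing import Sequence, Tuple

Point3 = Tuple[float, float, float]


def chamfer_distance(pred: Sequence[Point3], gt: Sequence[Point3]) -> float:
    """Symmetric Chamfer distance between two 3D point sets.

    For each point in one set, take the Euclidean distance to its nearest
    neighbour in the other set; average per direction and add both directions.
    Brute force: O(len(pred) * len(gt)).
    """
    if not pred or not gt:
        raise ValueError("both point sets must be non-empty")
    pred_to_gt = sum(min(dist(p, q) for q in gt) for p in pred) / len(pred)
    gt_to_pred = sum(min(dist(q, p) for p in pred) for q in gt) / len(gt)
    return pred_to_gt + gt_to_pred


# Toy example: the prediction misses one of the two groundtruth points.
gt_points = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
pred_points = [(0.0, 0.0, 0.0)]
print(chamfer_distance(pred_points, gt_points))
```

Because the two directions are averaged separately, a prediction that covers only part of the garment is still penalized through the groundtruth-to-prediction term.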

Results on In-the-wild Images

Figure 5 shows the results generated by our baseline approach trained on our Deep Fashion3D dataset. Note that all the images used for testing are in-the-wild images from the Internet, which were not seen during training.

Figure 5: Reconstruction from in-the-wild images using our approach.